Pattern Essence

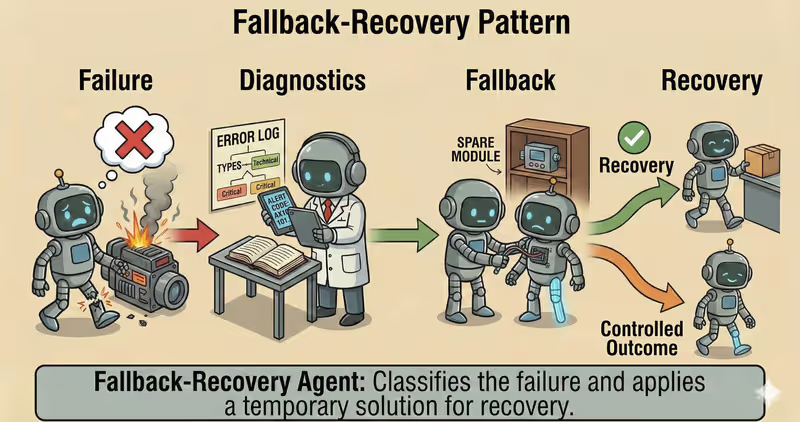

Fallback-Recovery Agent is a pattern where an agent does not just terminate on error, but goes through a controlled recovery process: classifies the failure, applies a fallback, and attempts to resume execution.

When to use it: when it is important not to crash on the first error, but to recover execution through a controlled scenario.

In real systems, failures are inevitable:

- external API timeouts

- temporary tool unavailability

- output validation errors

- partial dependency outages

The Fallback-Recovery approach turns "error = stop" into "error = controlled recovery scenario".

Problem

Imagine an agent prepares a daily report for a client:

- read metrics from an API

- build a table

- send the result

At step one, the API call times out.

Without recovery logic, the workflow simply stops.

One local error should not break the entire process if the remaining steps are still operable.

What you get:

- a missed deadline

- lost intermediate progress

- manual restart from zero

- unpredictable behavior in production

That is the core issue: without a recovery strategy, even a single failure breaks the whole scenario.

Solution

Fallback-Recovery introduces a recovery policy: explicit rules for what the system does after a failure.

Analogy: this is like autosave in an editor. If the app crashes, you do not start from scratch; you continue from the last safe state. The same logic applies here, but with explicit boundaries.

Key principle: not every error should be "hard-stopped". Some errors should be classified and recovered safely.

The agent may suggest a retry, but the execution layer decides:

- whether retry is allowed

- whether fallback is required

- whether escalation/stop is needed
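The split between what the agent suggests and what the execution layer permits can be sketched as a small pure function. This is only an illustration: `Budget`, `decide`, and the action strings are hypothetical names, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    """Remaining recovery budget for one task (hypothetical structure)."""
    retries_left: int
    fallbacks_left: int


def decide(suggested: str, error_kind: str, budget: Budget) -> str:
    """Return the action the execution layer permits, whatever the agent suggested."""
    if error_kind == "high_risk":
        return "escalate"  # never auto-recovered
    if error_kind == "retriable" and suggested == "retry" and budget.retries_left > 0:
        return "retry"
    if error_kind in ("retriable", "tool_unavailable") and budget.fallbacks_left > 0:
        return "fallback"
    return "stop"
```

The agent's suggestion is only one input; the error type and the remaining budget have the final say.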

Controlled process:

- Detect: record the failure

- Classify: determine the error type

- Decide: retry / fallback / escalation

- Recover: continue from the checkpoint

- End safely: stop with a clear stop_reason

This gives you:

- recovery of long-running processes after temporary failures

- graceful degradation (cached / partial result)

- no duplication of already successful steps

- a transparent stop reason
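Graceful degradation can be as simple as serving the last cached value when a fresh read fails. The sketch below assumes a plain dict as the cache; `fetch_with_degradation` and the result keys are illustrative names.

```python
from typing import Callable


def fetch_with_degradation(fetch: Callable[[], dict], cache: dict, key: str) -> dict:
    """Try a fresh read; on a transient failure, fall back to cached data and say so."""
    try:
        data = fetch()
        cache[key] = data  # refresh the cache on every successful read
        return {"data": data, "degraded": False, "stop_reason": None}
    except (TimeoutError, ConnectionError):
        if key in cache:
            # Stale but usable: the caller can label the report as partial.
            return {"data": cache[key], "degraded": True, "stop_reason": None}
        return {"data": None, "degraded": True, "stop_reason": "no_cached_data"}
```

The `degraded` flag matters: downstream steps should know they are working with stale or partial data.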

Works well if:

- max_retries and max_fallbacks limits exist

- a checkpoint is saved after safe progress

- classification separates retriable / non-retriable errors

- high-risk cases are not auto-recovered

The model may "want" to retry indefinitely, but the recovery policy defines safe recovery boundaries.

How It Works

Critical: recovery must have boundaries.

- max_retries and max_fallbacks

- step_timeout and total_timeout

- a stop_reason for every exit

- block "fallback -> retry -> fallback" cycles that run without a counter
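One way to enforce these boundaries is a single budget object that every recovery action must pass through. This is a minimal sketch: the class name `RecoveryBudget`, its defaults, and the stop-reason strings are assumptions made for illustration.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RecoveryBudget:
    max_retries: int = 2
    max_fallbacks: int = 1
    total_timeout: float = 60.0  # seconds allowed for the whole task
    retries: int = 0
    fallbacks: int = 0
    started_at: float = field(default_factory=time.monotonic)

    def spend(self, action: str) -> Optional[str]:
        """Record one recovery action; return a stop_reason if a limit is hit, else None."""
        if time.monotonic() - self.started_at > self.total_timeout:
            return "total_timeout"
        if action == "retry":
            if self.retries >= self.max_retries:
                return "max_retries_exceeded"
            self.retries += 1
        elif action == "fallback":
            if self.fallbacks >= self.max_fallbacks:
                return "max_fallbacks_exceeded"
            self.fallbacks += 1
        else:
            return "unknown_action"
        return None
```

Because the counters live for the whole task and never reset, a "fallback -> retry -> fallback" cycle runs out of budget instead of looping.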

Full flow description: Detect → Classify → Recover → Resume/Stop

Detect

The system records a failure: timeout, tool error, invalid output, or policy violation.

Classify

The failure is classified by type: retriable, tool_unavailable, invalid_output, non_retriable, high_risk.
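A classifier for these types can start as a plain mapping from exception class to failure kind. The mapping below is an assumption for illustration; `ToolUnavailableError` and `PolicyViolationError` are hypothetical application exceptions, and unknown errors default to the safest kind.

```python
class ToolUnavailableError(Exception):
    """Hypothetical error raised when a tool or dependency is down."""


class PolicyViolationError(Exception):
    """Hypothetical error raised when an action breaks a policy rule."""


def classify_error(err: Exception) -> str:
    """Map an exception to one of the failure kinds used by the recovery policy."""
    if isinstance(err, PolicyViolationError):
        return "high_risk"
    if isinstance(err, TimeoutError):
        return "retriable"
    if isinstance(err, (ToolUnavailableError, ConnectionRefusedError)):
        return "tool_unavailable"
    if isinstance(err, ValueError):
        return "invalid_output"  # e.g. output that failed parsing or validation
    return "non_retriable"  # unknown errors are never retried automatically
```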

Recover

Apply policy: retry with backoff, fallback to another tool, degrade mode (partial result / cached data), or escalation to a human.
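For "retry with backoff", a common choice is exponential backoff with jitter, so that many clients do not retry at the same moment. The sketch below is one possible `backoff` helper; the `base` and `cap` defaults are arbitrary assumptions.

```python
import random


def backoff(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Delay in seconds before retry number `attempt` (0-based).

    The ceiling doubles with each attempt up to `cap`; the actual delay is
    drawn uniformly between 0 and that ceiling ("full jitter").
    """
    ceiling = min(cap, base * (2 ** attempt))
    return random.uniform(0, ceiling)
```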

Resume/Stop

If recovery succeeds, continue from the last checkpoint. If not, stop in a controlled way.
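Resuming from the last checkpoint can be sketched with a store that remembers which steps already finished. `InMemoryCheckpoint` and `run_pipeline` are hypothetical names; a real system would persist this state outside the process.

```python
class InMemoryCheckpoint:
    """Toy checkpoint store: task_id -> {step name: result}."""

    def __init__(self):
        self._done = {}

    def save(self, task_id, step, result):
        self._done.setdefault(task_id, {})[step] = result

    def completed(self, task_id):
        return dict(self._done.get(task_id, {}))


def run_pipeline(task_id, steps, checkpoint):
    """Run (name, fn) steps in order, skipping steps already checkpointed."""
    results = checkpoint.completed(task_id)
    for name, fn in steps:
        if name in results:
            continue  # finished in an earlier run, do not repeat it
        results[name] = fn()  # may raise; earlier steps stay checkpointed
        checkpoint.save(task_id, name, results[name])
    return results
```

If a step raises, calling `run_pipeline` again with the same `task_id` continues from the failed step instead of from zero.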

In Code It Looks Like This

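    from time import sleep

    # run_step, checkpoint, classify_error, backoff, escalate_to_human and
    # stop_with_reason are application helpers; max_retries, max_fallbacks and
    # step_timeout come from the recovery policy.
    def run_with_recovery(task_id, goal, context):
        retries_used = 0
        fallbacks_used = 0

        while True:
            try:
                result = run_step(goal, context, timeout_sec=step_timeout)
                checkpoint.save(task_id, context, result)
                return result
            except TimeoutError as err:
                kind, error = "retriable", err
            except ToolUnavailableError as err:
                kind, error = "tool_unavailable", err
            except ValidationError as err:
                kind, error = "invalid_output", err
            except Exception as err:
                kind, error = classify_error(err), err

            # Python unbinds `err` when an except block ends, so the failure is kept in `error`.
            if kind == "retriable" and retries_used < max_retries:
                sleep(backoff(retries_used))
                retries_used += 1
                continue

            # Tool is down, or retries are exhausted: switch route while the fallback budget lasts.
            if kind in ("retriable", "tool_unavailable") and fallbacks_used < max_fallbacks:
                fallbacks_used += 1
                context.append(f"fallback_used={fallbacks_used}")
                context.append("route=secondary_tool")  # or alt_model / cached_path
                continue

            if kind == "high_risk":
                return escalate_to_human(goal, error, stop_reason="high_risk")

            # Every other exit carries an explicit stop_reason.
            return stop_with_reason(goal, stop_reason=kind, detail=str(error))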

Save checkpoints after a successful step or after safe partial progress (idempotent state). Otherwise retry can duplicate actions.
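For steps with side effects, such as sending the report, an idempotency key is one way to make a retry safe. This is a sketch under assumptions: `idempotency_key` and `SendOnce` are hypothetical helpers, and the receipt store is an in-memory dict rather than durable storage.

```python
import hashlib
import json


def idempotency_key(task_id: str, step: str, payload: dict) -> str:
    """Stable key for one logical action: same task, step and payload give the same key."""
    raw = json.dumps({"task": task_id, "step": step, "payload": payload}, sort_keys=True)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


class SendOnce:
    """Wrap a side-effecting function so a retried call does not repeat the effect."""

    def __init__(self, send_fn):
        self._send_fn = send_fn
        self._receipts = {}

    def send(self, task_id, step, payload):
        key = idempotency_key(task_id, step, payload)
        if key not in self._receipts:
            self._receipts[key] = self._send_fn(payload)
        return self._receipts[key]  # a retry gets the original receipt back
```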

What This Looks Like During Execution

Goal: prepare a client report

Step 1: collect metrics

- timeout in primary analytics API

- classify: retriable

- retry #1 -> fail

- retry #2 -> fail

Fallback:

- switch to read-replica API

- success

Resume:

- report assembled

- step completed without full process failure


When It Fits - and When It Does Not

Good Fit

| | Situation | Why Recovery Fits |
|---|---|---|
| ✅ | Unstable external tools and flaky APIs/tooling | Fallback routes and retries let you survive temporary failures without total process collapse. |
| ✅ | Long tasks where progress must not be lost | Checkpoint and resume let you recover from the last stable step. |
| ✅ | SLA/SLO requirements for process resilience | A recovery loop helps meet availability and reliability targets. |
| ✅ | You need explicit stop reasons instead of silent fail | The pattern formalizes stop causes and improves failure observability. |

Not a Good Fit

| | Situation | Why Recovery Does Not Fit |
|---|---|---|
| ❌ | One-off scenario where failure is not critical | A complex recovery layer costs more than the potential benefit. |
| ❌ | Retry/fallback scenarios are forbidden by business rules | There are no allowed recovery paths, so the pattern is not applicable. |
| ❌ | No checkpoint/state management | Technically, you cannot recover progress correctly after failure. |

In these cases the pattern is not worth it: a recovery layer adds operational complexity in the form of error logic, state handling, and maintenance overhead.

How It Differs from Supervisor

| | Supervisor | Fallback-Recovery |
|---|---|---|
| When it triggers | Before an action executes | After a failure or error |
| Main role | policy control and risk limitation | execution resilience and recovery |
| Decision types | approve / revise / block / escalate | retry / fallback / resume / stop |
| Key value | Prevent unsafe actions | Keep the process from collapsing on errors |

Supervisor is prevention. Fallback-Recovery is post-failure restoration.

When to Use Fallback-Recovery (vs Other Patterns)

Use Fallback-Recovery when you need to restore execution after failures instead of collapsing the whole process.

Quick test:

- if you need "retry/fallback/escalation after an error" -> Fallback-Recovery

- if you need "stop a risky action before execution" -> Guarded-Policy Agent

Comparison with other patterns and examples

Quick cheatsheet:

| If the task looks like this... | Use |
|---|---|
| You need a quick check before the final answer | Reflection Agent |
| You need deep criteria-based critique and answer rewriting | Self-Critique Agent |
| You need to recover process flow after timeout, exception, or tool crash | Fallback-Recovery Agent |
| You need strict policy checks before a risky action | Guarded-Policy Agent |

Examples:

Reflection: "Before the final response, quickly check logic, completeness, and obvious mistakes."

Self-Critique: "Evaluate the response with a checklist (accuracy, completeness, risks), then rewrite."

Fallback-Recovery: "If API does not respond, do retry -> fallback source -> escalation."

Guarded-Policy: "Before sending data outside, run a policy check: is this action allowed?"


How to Combine with Other Patterns

- Fallback-Recovery + ReAct: if failure happens mid-loop, the agent retries only the failing step instead of restarting from zero.

- Fallback-Recovery + Orchestrator: in parallel execution, only the broken branch recovers while other subtasks continue.

- Fallback-Recovery + Supervisor: policies are checked before recovery so fallback does not violate safety rules.
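The Supervisor combination can be sketched as a policy gate in front of fallback selection: a route is used only if the policy check approves it. The helper name `pick_allowed_fallback` and the route names in the usage note are hypothetical.

```python
from typing import Callable, Iterable, Optional


def pick_allowed_fallback(
    routes: Iterable[str],
    is_allowed: Callable[[str], bool],
) -> Optional[str]:
    """Return the first fallback route the policy check approves, or None."""
    for route in routes:
        if is_allowed(route):
            return route
    return None  # no safe route: the caller should escalate or stop
```

For example, with an allow-list `{"read_replica", "cache"}`, calling `pick_allowed_fallback(["third_party_api", "read_replica"], allowed.__contains__)` skips the unapproved route and returns `"read_replica"`.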

In Short

Fallback-Recovery Agent:

- Detects and classifies failures

- Applies retry / fallback policies

- Returns to execution through a checkpoint

- Stops in a controlled way if recovery is impossible

Pros and Cons

Pros

- recovers quickly after failures

- reduces service downtime

- keeps the process stable during errors

- makes critical scenarios easier to control

Cons

- fallback scenarios must be designed in advance

- additional logic increases system complexity

- not every failure can be recovered automatically

FAQ

Q: Is adding retries alone enough?

A: No. The minimum safe set is max_retries + backoff + step_timeout + stop_reason. Without this, retries become a budget-burning loop.

Q: When is fallback better than retry?

A: When the failure is systemic: tool unavailable, quota exhausted, or endpoint degraded.

Q: Why do we need checkpoint if we already have fallback?

A: Fallback changes the execution path, but a checkpoint preserves progress so you do not rerun the whole scenario from the beginning.

What Next

Fallback-Recovery adds failure resilience.

But how do you make sure risky actions are never started without policy checks?