Pattern Essence

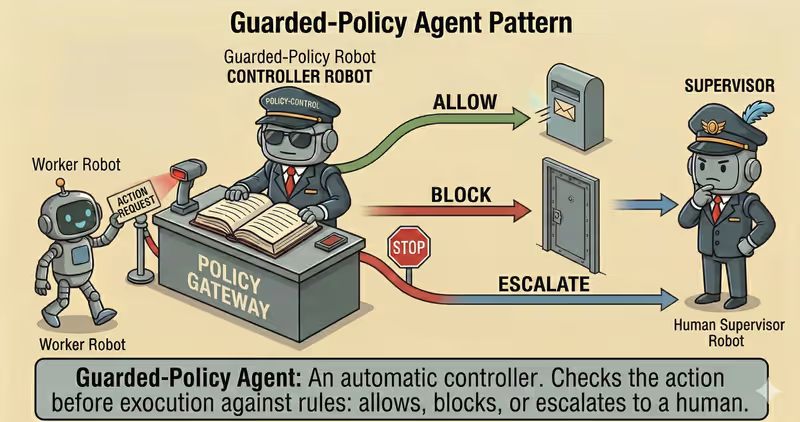

Guarded-Policy Agent is a pattern where before every action you apply a policy-gate: allow, deny, rewrite, or escalate based on formalized rules.

When to use it: when agent actions must pass formal rule checks before execution.

The idea is simple: the agent may propose anything, but only steps that pass policy checks are executed.

Policy guardrails usually check:

- allowed tools and parameters

- data access boundaries

budget/timelimits- action risk level

Problem

Imagine a banking scenario: you need to transfer 100$, but someone accidentally enters 10,000$.

If the system without checks simply says "execute", that action goes to prod.

Even when:

- the customer does not have that balance

- the amount exceeds the role limit

- the destination account is external and needs extra checks

Without a technical policy gate, the agent can execute a dangerous action even when it is obviously not supposed to pass.

That is the problem: without constraints, any action can be executed, even if it is:

- dangerous

- too expensive

- violating access rules

Solution

Guarded-Policy Agent introduces mandatory checks before every action.

Analogy: this is like a turnstile with access control. Even if a person wants to pass, permissions and rules are checked first. Without approval, the system simply does not let the action proceed.

Core principle: the model may propose any step, but only steps that pass the policy gate are executed.

Every action goes through:

- permission checks

- budget/limit checks

- data access checks

- risk assessment

After that, policy-engine returns a decision:

- allow (

allow) - execute - rewrite (

rewrite) - replace with a safe variant - deny (

deny) - block - escalate (

escalate) - hand over to a human

This protects against cases where the agent can:

- write instead of read

- exfiltrate sensitive data

- run an expensive query

- perform a destructive operation

Works well if:

- the agent does not have direct access to tools

- execution can happen only through

policy-engine - every action must pass

allow/deny/rewrite/escalate

Agent reliability is not just "good intent", but actions it is technically unable to execute outside policy.

How It Works

Policy-gate does not execute the action itself. It decides whether the action may be executed and in what form.

Full flow: Propose → Check Policy → Enforce → Execute/Block

Propose Action

The agent forms intent: which tool, with which args, and why this step.

Check Policy

Policy checks intent: allowlist/blocklist, access scope, budget limits, runtime state (quota, spend), data sensitivity.

Enforce Decision

Policy-engine returns an enforcement decision: allow, deny, rewrite, or escalate.

Execute/Block

The system either executes the action or stops the flow with a transparent stop reason.

In Code, It Looks Like This

action = agent.next_action(context)

decision = policy_engine.evaluate(

action=action,

user_role=user_role,

budget_state=budget_state,

)

if decision.type == "allow":

result = execute(action)

elif decision.type == "rewrite":

context.append(decision.reason)

return agent.next_action(context) # propose again through the same gate

elif decision.type == "escalate":

result = human_approval(action)

else:

result = stop_with_reason(decision.reason)

return result

Core principle: agent intent and execution are different layers. Policy sits between intent and execution (execution).

The model has no direct path to execution, only through a policy-gated execution layer (execution layer).

How It Looks During Runtime

Goal: "Export all customers to CSV and send to external email"

Agent action:

- tool: export_customers

- params: include_pii=true

- destination: external_email

Policy check:

- rule: PII export to external channels = deny

- decision: block

- reason: policy.pii_exfiltration_guard

Result:

- action was not executed

- a controlled refusal was returned

Full Guarded-Policy agent example

When It Fits - And When It Doesn't

Good Fit

| Situation | Why Guarded-Policy Fits | |

|---|---|---|

| ✅ | There are risky tools/data and access to sensitive operations | Policy gate blocks dangerous actions before execution. |

| ✅ | You need compliance/security boundaries | Rules are enforced technically, not only via prompt instructions. |

| ✅ | Decision explainability matters | You can transparently show allow/deny and the reason. |

| ✅ | Cost of mistakes is high: money, security, legal risk | Preventive control lowers probability of expensive failures. |

Not a Fit

| Situation | Why Guarded-Policy Does Not Fit | |

|---|---|---|

| ❌ | Read-only sandbox with no risky actions | A separate policy layer adds little extra value. |

| ❌ | Rules are not formalized | If rules cannot be checked formally, enforcement will not be reliable. |

| ❌ | No capacity to maintain policy set | Without versioning and tests, the policy layer degrades quickly. |

Because the policy layer adds engineering complexity: rules, policy tests, and continuous updates to business processes.

How It Differs From Supervisor Agent

| Guarded-Policy | Supervisor Agent | |

|---|---|---|

| Main role | Automatically applies strict policy rules to every action | Oversees agent decisions more broadly: risks, quality, and need for escalation |

| When it intervenes | At every step before execution | At key or questionable process steps |

| Decision type | allow / deny / rewrite / escalate | approve / revise / block / escalate |

| When to choose | When you need a technical "barrier" that cannot be bypassed | When you need process oversight and control of complex decisions |

Guarded-Policy is a technical barrier "by rules".

Supervisor Agent is supervisory control "by situation".

When To Use Guarded-Policy (vs Other Patterns)

Use Guarded-Policy when you need to stop risky actions before execution under explicit policy rules.

Quick test:

- if you need "allow/deny check before action" -> Guarded-Policy

- if you need "recover after an existing failure" -> Fallback-Recovery Agent

Comparison with other patterns and examples

Quick cheat sheet:

| If the task looks like this... | Use |

|---|---|

| You need a quick check before final response | Reflection Agent |

| You need deep criteria-based critique and answer rewriting | Self-Critique Agent |

| You need to recover after timeout, exception, or tool crash | Fallback-Recovery Agent |

| You need strict policy checks before a risky action | Guarded-Policy Agent |

Examples:

Reflection: "Before the final response, quickly check logic, completeness, and obvious mistakes".

Self-Critique: "Evaluate answer by checklist (accuracy, completeness, risks), then rewrite".

Fallback-Recovery: "If API does not respond, do retry -> fallback source -> escalation".

Guarded-Policy: "Before sending data externally, check policy: whether this is allowed".

Not sure whether your case already needs strict policy control? Design Your Agent →

How To Combine With Other Patterns

- Guarded-Policy + ReAct: every action in the loop is passed through policy-check before execution.

- Guarded-Policy + Supervisor: Supervisor decides when escalation is needed, and policy-engine automatically enforces hard rules.

- Guarded-Policy + Fallback-Recovery: if an action is denied or a step fails, the agent switches to an allowed and safe fallback.

In Short

Guarded-Policy Agent:

- Checks every action before execution

- Enforces policy rules

- Blocks or escalates unsafe steps

- Reduces risk of failures and compliance violations

Pros and Cons

Pros

blocks unsafe actions before execution

better protects data and access boundaries

rules are easier to test and audit

simplifies compliance with security requirements

Cons

policies require ongoing maintenance

overly strict rules can slow down workflows

rule bugs can block legitimate actions

FAQ

Q: Can policy checks be replaced by prompt-only instructions?

A: No. Prompt works at intent level, but does not control execution. Policy must be enforced in runtime layer.

Q: Which is better: allowlist or blocklist?

A: For high-risk systems it is safer to start with allowlist: only explicitly defined actions are allowed.

Q: What if a rule is too strict and blocks useful action?

A: Add controlled exceptions: scope, role-based conditions, or a human-approval path instead of full deny.

What Next

Guarded-Policy approach protects an agent from unsafe actions before execution.

But what if the agent needs safe code execution in an isolated environment?