Pattern Essence

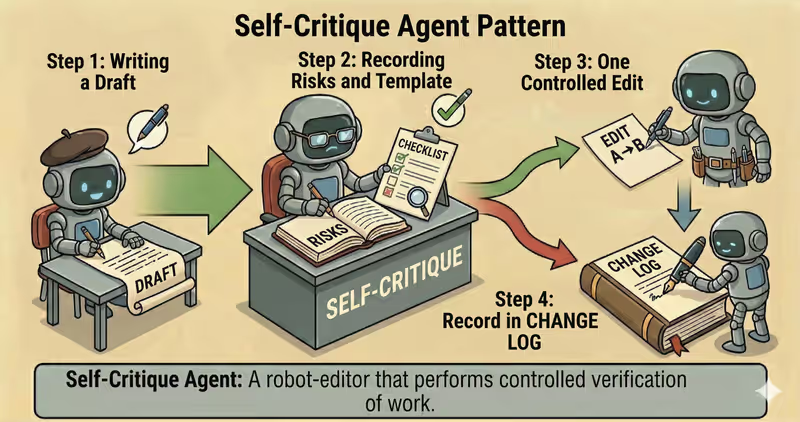

Self-Critique Agent is a pattern where the agent first writes a draft, then records risks and required changes using a fixed template, performs one constrained revision, and logs exactly what changed.

When to use it: when you need strict, structured risk review and controlled revision with an audit trail.

Compared to lightweight reflection, self-critique is usually stricter:

- critique has a fixed format (for example, JSON)

- edits are allowed only by rules

- text growth is controlled

- the system stores a change log (before → after)

Problem

Imagine an agent preparing a customer-facing issue update.

Draft:

"The release is delayed because of a failure in the payment module."

Then a request arrives:

"Make the wording softer."

Without a self-critique frame, the agent often does a "pretty rewrite" that goes beyond the task:

- adds new assumptions

- makes the tone less specific

- expands the text beyond the required scope

- hides the issue instead of clarifying it

"Improve wording" must not become "change meaning".

As a result:

- meaning shifts silently

- it is unclear what was actually fixed

- audit cannot reconstruct revision logic

- the response becomes less reliable for decision-making

That is the core problem: during "improvement," the agent can change meaning even though it should change only form.

Solution

Self-Critique introduces a rule: edit only what is listed in "required changes".

Analogy: it is like editorial revision from a list of comments. First we lock what exactly must be fixed, then we apply one controlled revision. This makes the response clearer without changing meaning.

Core principle: structured critique first, then one controlled revision with an audit trail.

The agent may propose rewrites, but the system allows only point edits that are explicitly listed in "required changes".

Controlled flow:

- Draft: generate the first version

- Critique: produce an artifact (

risks+required_changes) - Decision:

ok/revise/escalate - Revision: perform one constrained revision

- Audit: log

diff+ metadata

This gives you:

- clearer output without changing facts

- clear split between "what is wrong" and "what was fixed"

- reproducible revisions

- control over high-risk outputs before publishing

Works well if:

- critique uses a fixed structure (

schema-driven) - revision is limited to

required_changes no_new_factsis enforced- audit diff is mandatory

How It Works

For this pattern to be safe, you need strict boundaries:

- one critique pass

- one revision pass

- do not add new facts

- edit only items from required changes

- do not bloat the text (for example, +20% max)

- for high risk: stop or request human review

Full flow description: Draft → Critique → Revise → Audit

Draft

The agent generates the initial response.

Critique

A dedicated critique step returns structured output: risks, required changes, severity.

Revision

The agent changes only what is marked required, without expanding scope.

Audit

The system logs before/after, changed flag, and a short diff for debugging and incident analysis.

In Code, It Looks Like This

draft = writer.generate(goal, context)

critique = critic.review_once(

draft=draft,

schema="risks_required_changes_v1",

)

if critique.high_risk:

return escalate_to_human(critique.reason)

if critique.ok:

return draft

revised = writer.revise_once(

draft=draft,

required_changes=critique.required_changes,

rules=[

"no_new_facts",

"max_length_increase_pct=20",

"keep_scope",

],

)

approved = supervisor.review_output_patch(

original=draft,

revised=revised,

allowed_changes=critique.required_changes,

)

audit.log_diff(

before=draft,

after=approved,

risks=critique.risks,

)

return approved

Self-Critique should not run "until perfect." One critique + one revision (revise), and a check that revision stayed within required_changes.

How It Looks at Runtime

Goal: propose a safe action plan during a network incident

Draft:

"The issue was caused by the network. We should restart the entire cluster."

Critique:

- risk: claim about root cause without evidence

- risk: action has too wide blast radius (state changes)

- required_change: add checks before restart

Revision:

"A likely cause is a network failure.

Before restarting the cluster, verify node health and latency.

If a partial outage is confirmed, restart only affected nodes."

Full Self-Critique agent example

When It Fits - And When It Doesn't

Good Fit

| Situation | Why Self-Critique Fits | |

|---|---|---|

| ✅ | High-risk output before sending | Self-critique adds structured control before final release of the response. |

| ✅ | You need an audit trail of edits | Critique and edits are explicit and suitable for audit. |

| ✅ | You have a strict critique schema | A formal structure makes review reproducible and controllable. |

| ✅ | You need controlled rewriting without output bloat | Self-critique keeps rewriting limited to necessary changes only. |

Not a Fit

| Situation | Why Self-Critique Does Not Fit | |

|---|---|---|

| ❌ | Latency is critical and there is no budget for an extra pass | A second generation step can be too expensive in both time and cost. |

| ❌ | You cannot enforce hard rules such as no_new_facts | Without strict constraints, critique/rewriting can reduce reliability. |

| ❌ | The task is deterministic and consistently validated by tests | An extra critique pass duplicates an already reliable validation process. |

Because self-critique adds a second generation step and increases run cost.

How It Differs From Reflection

| Reflection | Self-Critique | |

|---|---|---|

| Review depth | Quick "is this okay" check (light review) | Strictly records "what is wrong" and "what must be fixed" |

| Format | ok/issues/fix | risks, severity, required changes |

| Revision | Usually minimal | Constrained rewrite under explicit rules |

| Operational focus | Fast quality pass | Controlled rewriting + change log |

Reflection is more often used as a lightweight filter before sending. Self-Critique is for stricter control over changes.

When To Use Self-Critique (vs Other Patterns)

Use Self-Critique when you need deep critique against explicit criteria and controlled rewriting.

Quick test:

- if you need to "evaluate by checklist and rewrite the answer" -> Self-Critique

- if you need to "do only a short pre-send check" -> Reflection Agent

Comparison with other patterns and examples

Quick cheat sheet:

| If the task looks like this... | Use |

|---|---|

| You need a quick check before the final response | Reflection Agent |

| You need deep criteria-based critique and answer rewriting | Self-Critique Agent |

| You need to recover after timeout, exception, or tool failure | Fallback-Recovery Agent |

| You need strict policy checks before risky actions | Guarded-Policy Agent |

Examples:

Reflection: "Before the final response, quickly check logic, completeness, and obvious mistakes."

Self-Critique: "Evaluate the answer by checklist (accuracy, completeness, risks), then rewrite."

Fallback-Recovery: "If the API does not respond, do retry -> fallback source -> escalate."

Guarded-Policy: "Before sending data externally, run policy checks to confirm it is allowed."

Not sure whether you need a full Self-Critique cycle instead of only Reflection? Design Your Agent →

How To Combine With Other Patterns

- Self-Critique + RAG: critique and revisions are allowed only within facts from retrieval context.

- Self-Critique + Supervisor: risky edits are not auto-applied and are routed for human approval.

- Self-Critique + Reflection: Reflection does a quick pre-check, and Self-Critique turns on for complex or disputed answers.

In Short

Self-Critique Agent:

- Creates a clear list of issues and required changes

- Applies one controlled revision

- Stores a change log (before → after)

- Prevents "text improvement" from silently changing meaning

Pros and Cons

Pros

reviews the answer before final output

reduces obvious mistakes

improves clarity of wording

helps enforce task requirements

Cons

adds an extra step and latency

does not replace fact verification

without clear criteria, it can over-edit

FAQ

Q: Can we run multiple critique passes?

A: Technically yes, but it quickly turns into an expensive loop. Safe default baseline: 1 + 1.

Q: Why is change logging mandatory?

A: Without a diff, it is hard to understand what changed quality and where errors appeared.

Q: Do we need a separate critic model?

A: Sometimes, but not required. More important is strict schema, output validation, and hard revision rules.

What Next

Self-Critique improves quality through controlled rewriting.

But what should you do when the answer must not only be rewritten, but checked against policy before a risky action?