When an agent works well, it looks like magic.

You give it a task, explain almost nothing, and do not control every step, yet the system finds data, picks tools, and returns with a result.

At some point, it starts to feel like it understands what it is doing.

And then suddenly:

- It uses the wrong tool

- It forgets half the task

- It makes an unnecessary API request

- Or confidently returns complete nonsense

And the worst part: it does it very convincingly.

No language mistakes.

No hesitation.

As if everything is correct.

At that moment, a logical question appears:

How could a system that just acted intelligently make such a mistake?

Because inside, there is no reasoning

To understand why an agent sometimes makes mistakes, we need to look at how it actually makes decisions.

Because inside it, there is no "reasoning mind".

An AI agent does not think. It does not check facts. It does not understand what is right and what is wrong.

It works through a language model.

And a language model does not know answers.

On its own, it does not seek truth. It does not open a knowledge base. It does not verify reality.

It picks the next action or response that looks most probable in the current context.

Every time the agent:

- Decides the next step

- Picks a tool

- Writes a request

- Or evaluates a result

it is only trying to guess what looks most correct in this context.

And sometimes that guess is wrong.
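As a rough sketch of what "most probable" means, the snippet below turns made-up scores for three candidate actions into probabilities and picks the highest one. The action names and the numbers are assumptions for illustration only. A real model scores tokens rather than whole actions, and it usually samples instead of always taking the top option.

```python
import math

def softmax(scores: dict) -> dict:
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtract the maximum before exponentiating for numerical stability.
    exps = {name: math.exp(value - highest) for name, value in scores.items()}
    total = sum(exps.values())
    return {name: value / total for name, value in exps.items()}

# Hypothetical scores: how well each action "fits" the current context.
candidate_scores = {
    "call get_user_data": 2.0,
    "ask for clarification": 0.5,
    "write final answer": 1.0,
}

probs = softmax(candidate_scores)
chosen = max(probs, key=probs.get)  # the most probable action wins
print(chosen)
```

Nothing in this selection asks whether the chosen action is correct. It only asks which one scores highest.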

| | Human | LLM |
|---|---|---|
| Checks facts | ✅ | ❌ |
| Knows the answer | Sometimes | ❌ |
| Predicts the answer | ❌ | ✅ |

How the agent actually makes decisions

The agent does not know which action is correct.

But it was trained on a huge number of examples of what a correct action looks like in similar situations.

During training, the model saw:

- What API requests look like

- How data is analyzed

- How reports are formed

- How tools are used

And now, when the agent works, it looks at:

- The goal

- Previous steps

- Received results

And asks itself:

"Which action looks most similar to one that helped in a similar situation before?"

For example, when things go well:

The agent gets a task: "Collect customer data."

The context has a tool: get_user_data(user_id)

The model "knows" that in similar tasks:

- First, data is retrieved

- Then, it is analyzed

So it chooses to call get_user_data.

Not because it is certain.

But because it looks like the next logical step.

But here is when things go wrong:

The agent gets a task: "Write a short company overview for a new client."

The context contains old meeting notes for a different client, in a similar format.

The model sees a similar pattern and thinks:

"In a similar situation, people usually use already available data."

So it generates an overview, but for the wrong company.

Confidently. Without warnings. With cleanly formatted text.

Just not about the right client.

After each step, it predicts again:

"What do people usually do after this?"

And so on, step by step.

That is why an agent often takes correct actions.

But sometimes, not.

Because it does not check whether the result is true.

It only selects what looks most appropriate in this context.
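The step-by-step loop described above can be sketched as follows. `predict_next_action` is a hypothetical stand-in for the model: a lookup table of "what usually comes next". The action names are made up, and the goal is ignored to keep the sketch small.

```python
def predict_next_action(goal: str, history: list) -> str:
    """Hypothetical stand-in for the model: return the step that usually follows the last one."""
    usual_next = {
        None: "retrieve_data",
        "retrieve_data": "analyze_data",
        "analyze_data": "write_report",
        "write_report": "finish",
    }
    last_action = history[-1]["action"] if history else None
    return usual_next[last_action]

def run_agent(goal: str, max_steps: int = 10) -> list:
    """Predict, act, record, then predict again until the model says 'finish'."""
    history = []
    for _ in range(max_steps):
        action = predict_next_action(goal, history)
        if action == "finish":
            break
        # A real system would call a tool here; this sketch records a placeholder result.
        history.append({"action": action, "result": f"result of {action}"})
    return history

steps = run_agent("Collect customer data")
print([step["action"] for step in steps])
```

Notice what is missing: no step in the loop verifies that the previous result was right before predicting the next action.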

In code this looks like

Below is the same principle in a simplified form: the model does not "know the truth"; it picks the step that looks most probable in the current context.

First we have actions (tools) that can be called:

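    def fetch_company_profile(company_id: str) -> dict:
        # Stub for a lookup in an authoritative source, keyed by company ID.
        return {"company_id": company_id, "summary": "Official profile"}

    def summarize_notes(notes: str) -> dict:
        # Summarizes whatever text it receives; it cannot tell which company the notes describe.
        return {"summary": f"Summary from notes: {notes}"}

    # Registry that maps tool names to the functions that implement them.
    TOOLS = {
        "fetch_company_profile": fetch_company_profile,
        "summarize_notes": summarize_notes,
    }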

Now we have task context:

    state = {
        "task_company_id": "acme",
        "old_notes": "Meeting notes about beta-corp",  # old notes for another company
    }

The model makes a guess about the next step. Here a hard-coded function stands in for the model:

    def choose_action(state: dict) -> dict:
        # Hard-coded stand-in for the model's guess about the next step.
        # If notes already exist in context, the model may decide
        # this is "enough" for a quick overview.
        if state.get("old_notes"):
            return {
                "tool": "summarize_notes",
                "parameters": {"notes": state["old_notes"]},
            }
        # Otherwise fall back to the authoritative profile lookup.
        return {
            "tool": "fetch_company_profile",
            "parameters": {"company_id": state["task_company_id"]},
        }

The system executes what the model proposed:

    model_output = choose_action(state)
    tool_name = model_output["tool"]
    params = model_output["parameters"]
    result = TOOLS[tool_name](**params)

In this case, the result will be "convincing," but about the wrong company:

    # {'summary': 'Summary from notes: Meeting notes about beta-corp'}

To reduce risk, we add a simple source check before the final response:

    def validate_summary_source(state: dict, result: dict) -> dict:
        # Hard-coded for this example: flag a summary that mentions beta-corp
        # while the task is about acme.
        if "beta-corp" in result.get("summary", "") and state["task_company_id"] == "acme":
            return {"error": "Context mismatch: data is about the wrong company"}
        return {"ok": True}

This does not remove the model's limits, but it catches this class of mistakes before they reach production.
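The check above is hard-coded for one pair of companies. One possible generalization, sketched here under stated assumptions, is to have every tool result carry the ID of the company its data came from, and to compare that ID with the task's company ID. The `source_company_id` field and the `validate_source` function are hypothetical and not part of the example above.

```python
def validate_source(task_company_id: str, result: dict) -> dict:
    """Reject a result unless it declares the same company the task is about."""
    source = result.get("source_company_id")  # hypothetical provenance field
    if source is None:
        return {"ok": False, "error": "Unknown source: result has no source_company_id"}
    if source != task_company_id:
        return {
            "ok": False,
            "error": f"Context mismatch: data is about {source}, task is about {task_company_id}",
        }
    return {"ok": True}

# Assumed shape of tool results once each tool tags its output with a source.
notes_result = {
    "summary": "Summary from notes: Meeting notes about beta-corp",
    "source_company_id": "beta-corp",
}
profile_result = {"summary": "Official profile", "source_company_id": "acme"}

print(validate_source("acme", notes_result))
print(validate_source("acme", profile_result))
```

The design choice is to treat a missing source as a failure too, so untagged data cannot slip through by default.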


Analogy from everyday life

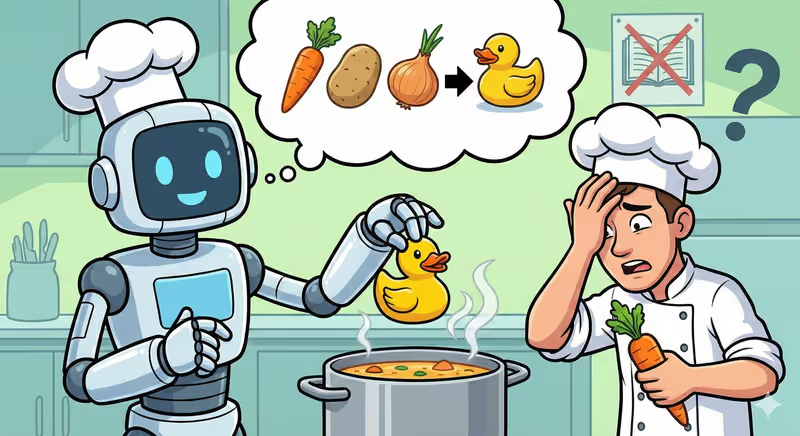

Imagine a beginner cook who watched thousands of recipe videos.

They have seen:

- How to fry meat

- How to cook soup

- How to make sauces

- How to plate dishes

But they do not remember a single recipe word for word.

And they do not know for sure how to cook every dish correctly.

Now you ask them: "Cook something similar to carbonara."

They do not open a book. They do not check instructions.

They think:

"What do people usually do in a similar dish?"

And:

- Add cream

- Fry bacon

- Mix with pasta

Sometimes it turns out very good.

Sometimes, strange.

Because they do not know the correct way.

They just do what looks most similar to a correct recipe they saw before.

An agent works the same way.

It does not know which action is correct. And it does not remember ready-made solutions.

It chooses the action that looks most like the correct one in a similar situation.

In short

A language model is not a reasoning mind:

- It does not know correct answers

- It does not check facts

- It only generates the most probable continuation

That is why an agent can look very smart and at the same time make confident mistakes with no warning at all.

This is not a bug in one specific system. It is the fundamental nature of how language models work.

What to do about it? Understanding this limit is already the first step. Next come concrete ways to make an agent more reliable: provide clear context, limit tools, add result validation. And one of the most important is to give it memory.
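Of these, limiting tools is the easiest to show in a few lines. The sketch below assumes a per-task allowlist; the `ALLOWED_TOOLS` mapping, the `company_overview` task type, and the `execute` function are hypothetical names chosen for illustration.

```python
def fetch_company_profile(company_id: str) -> dict:
    return {"company_id": company_id, "summary": "Official profile"}

def summarize_notes(notes: str) -> dict:
    return {"summary": f"Summary from notes: {notes}"}

TOOLS = {
    "fetch_company_profile": fetch_company_profile,
    "summarize_notes": summarize_notes,
}

# Hypothetical allowlist: which tools each kind of task may use.
ALLOWED_TOOLS = {
    "company_overview": {"fetch_company_profile"},
}

def execute(task_type: str, model_output: dict) -> dict:
    """Run the tool the model proposed, but only if the task type permits it."""
    tool_name = model_output["tool"]
    if tool_name not in ALLOWED_TOOLS.get(task_type, set()):
        return {"error": f"Tool '{tool_name}' is not allowed for task '{task_type}'"}
    return TOOLS[tool_name](**model_output["parameters"])

blocked = execute(
    "company_overview",
    {"tool": "summarize_notes", "parameters": {"notes": "Meeting notes about beta-corp"}},
)
allowed = execute(
    "company_overview",
    {"tool": "fetch_company_profile", "parameters": {"company_id": "acme"}},
)
print(blocked)
print(allowed)
```

With the allowlist in place, the model can still guess wrong, but a wrong guess is refused instead of executed.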

FAQ

Q: Does an agent know its answer is correct?

A: No. It selects the option that looks most probable in this context.

Q: Can the model check itself?

A: It can try to evaluate its answer, but that evaluation comes from the same kind of prediction, so it is still a guess.

Q: Why does an agent sound confident even when it is wrong?

A: Because the model is trained to generate plausible text, not to doubt itself.

What’s next

Now you know why an agent can make mistakes, and that they come not from negligence but from the nature of the technology itself.

There are several ways to make an agent more reliable: clear instructions, a limited set of tools, result validation. But one of the most effective and most interesting is memory.

If an agent can remember:

- What has already been done

- Which tools worked

- Which data it received earlier

then it starts relying less on guesses and more on concrete experience in the current task.
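As a preview, here is a minimal sketch of what such memory could look like, assuming a plain in-process object with one field per item in the list above. The `TaskMemory` class and its method names are hypothetical, and this is not presented as the design a real agent framework uses.

```python
from dataclasses import dataclass, field

@dataclass
class TaskMemory:
    done_steps: list = field(default_factory=list)    # what has already been done
    tool_success: dict = field(default_factory=dict)  # which tools worked
    received: dict = field(default_factory=dict)      # which data was received earlier

    def record(self, step: str, tool: str, ok: bool, data=None) -> None:
        """Store the outcome of one step so later steps can rely on it."""
        self.done_steps.append(step)
        self.tool_success[tool] = ok
        if ok and data is not None:
            self.received[step] = data

    def recall(self, step: str):
        """Return data from an earlier step, or None if the step never produced any."""
        return self.received.get(step)

memory = TaskMemory()
memory.record(
    "fetch profile for acme",
    "fetch_company_profile",
    True,
    {"summary": "Official profile"},
)

# Before guessing again, the agent can look up what it already has.
cached = memory.recall("fetch profile for acme")
print(cached)
```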

That is exactly what the next article is about.